When Your Static Archive Becomes a Video Goldmine—And When It Doesn’t

Last Updated on 19 March 2026

Most solo creators have a folder somewhere. It’s labeled “Old Content,” “Archive,” or maybe just “Misc.” Inside are hundreds of static images: product shots that never made the cut, screenshots from abandoned tutorials, B-roll photos from events, design mockups that clients rejected. For years, these files sat dormant, too good to delete, too static to reuse.

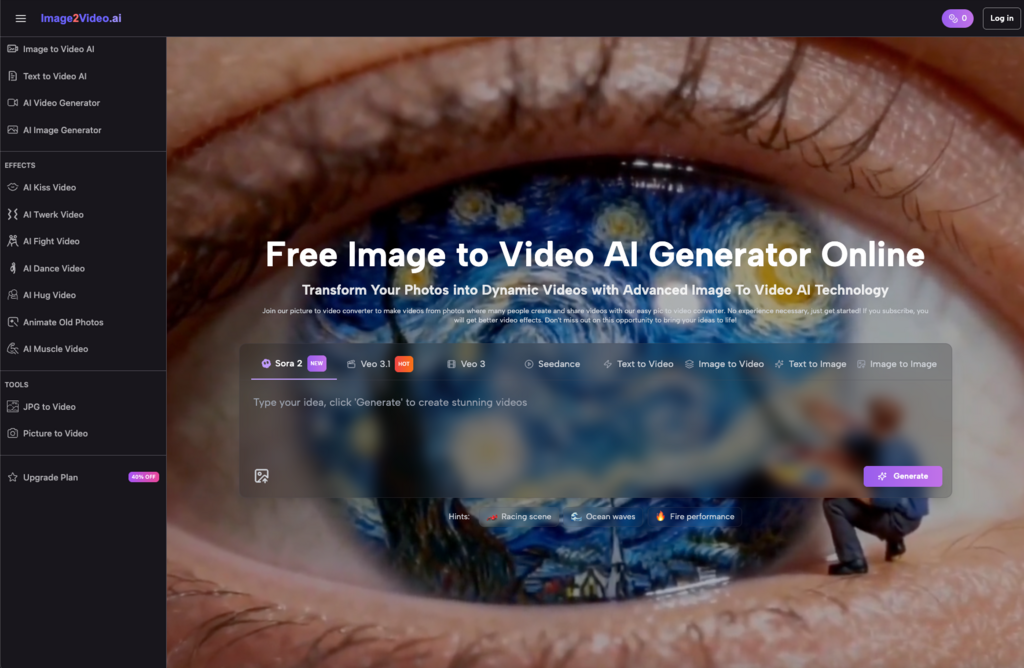

Then Image to Video AI tools arrived, promising to transform those frozen moments into dynamic, scroll-stopping videos. The pitch feels almost too perfect: upload a photo, click a button, watch your static assets come alive. No camera. No reshoots. No expensive motion graphics software.

But here’s what actually happens when you sit down to convert that first batch of photos to video.

The “Magic Button” Illusion Dissolves Quickly

The first upload is genuinely surprising. You drag a two-year-old product photo into an Image to Video AI generator, hit generate, and thirty seconds later, the image is moving. Not just a Ken Burns zoom—actual motion. The fabric ripples. The background breathes. The product rotates slightly, as if caught by an invisible camera operator.

That initial “wow” is real. It’s also misleading.

What solo creators discover within their first week is that motion without intention is just noise. The AI doesn’t know your narrative. It doesn’t understand that this product shot was meant to highlight durability, not create an ethereal, dreamlike atmosphere. It doesn’t recognize that your screenshot of a dashboard needs to guide the viewer’s eye to the “New Feature” button, not animate the entire interface into a distracting swirl.

The tool gives you motion. You still have to give it meaning.

This is the first expectation reset: Image to Video AI doesn’t replace creative direction—it shifts it. Instead of deciding how to animate, you’re deciding which of the AI’s interpretations serves your story. The work doesn’t disappear; it transforms into curation, prompting, and selection.

The Hidden Tax: Selection and Sequencing

Seasoned creators know that editing is where time actually goes. What surprises beginners using Photo to Video AI tools is that the selection process becomes more complex, not less.

When you work with static images, you choose one hero shot. With video, you need sequences. The AI might generate four variations of motion for a single photo. Each variation tells a slightly different story. One feels energetic. Another feels contemplative. A third looks cinematic but distracts from the product.

Suddenly, your decision matrix expands. You’re not just asking “Is this photo good?” You’re asking “Does this motion style match the three seconds before it? Does it create visual continuity with the clip after? Will this hold attention on a muted autoplay scroll?”

For creators repurposing archives, this creates an unexpected bottleneck. That folder of 200 static assets? It might yield 40 usable video clips. But sequencing those 40 clips into coherent 15-second narratives requires a different skill set than the original photography did. The Image to Video converter handles the technical transformation. The creator still handles the narrative architecture.

Quality Thresholds: Where the Tool Shines vs. Where It Struggles

Not all photos convert equally. This is the practical reality that rarely makes it into tool descriptions.

High-resolution, well-lit product photography with clear focal points tends to produce the most convincing results. The AI has texture to work with, clear edges to animate, and a subject that dominates the frame. These conversions often pass the “scroll test”—they look professional enough to stop a thumb mid-swipe.

Screenshots, text-heavy images, and low-contrast photography present different challenges. The AI may interpret text as texture, creating weird rippling effects across UI elements. It might animate background elements that should stay static, or fail to recognize that a logo needs to remain crisp while the scene around it moves.

Portrait photography sits in the middle. Animating a portrait with Image to Video technology can produce striking results: subtle breathing, eye movement, hair shifting. But it can also veer into uncanny valley territory if the motion is too pronounced or the facial features distort even slightly.

Smart solo creators develop a filtering instinct within their first month. They learn to pre-sort their archives before uploading, identifying which photos have the structural qualities that AI motion enhances rather than complicates.
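That pre-sorting instinct can be roughed out as a simple triage pass. The sketch below is only an illustration of the idea, not a feature of any particular tool: the `Photo` fields, the `kind` labels, the bucket names, and both thresholds are hypothetical, and the contrast score is assumed to have been measured beforehand with whatever image library you already use.

```python
from dataclasses import dataclass


@dataclass
class Photo:
    name: str
    width: int       # pixels
    height: int      # pixels
    contrast: float  # assumed pre-computed score from 0.0 (flat) to 1.0 (punchy)
    kind: str        # hypothetical label: "product", "portrait", "screenshot", "other"


# Hypothetical cut-offs; tune them against your own archive.
MIN_SHORT_SIDE = 1080
MIN_CONTRAST = 0.35


def presort(photos):
    """Bucket photos by how likely AI motion is to help rather than complicate."""
    buckets = {"convert_first": [], "review_by_hand": [], "skip_for_now": []}
    for p in photos:
        sharp_enough = min(p.width, p.height) >= MIN_SHORT_SIDE
        contrasty = p.contrast >= MIN_CONTRAST
        if p.kind == "screenshot" or not contrasty:
            # Text-heavy or low-contrast images tend to ripple or smear.
            buckets["skip_for_now"].append(p.name)
        elif p.kind == "portrait" or not sharp_enough:
            # Portraits can look great or uncanny; small files give the AI less to work with.
            buckets["review_by_hand"].append(p.name)
        else:
            buckets["convert_first"].append(p.name)
    return buckets


archive = [
    Photo("bottle_hero.jpg", 4000, 3000, 0.62, "product"),
    Photo("dashboard.png", 1920, 1080, 0.55, "screenshot"),
    Photo("founder.jpg", 3000, 4000, 0.48, "portrait"),
    Photo("event_dim.jpg", 2400, 1600, 0.20, "other"),
]

for bucket, names in presort(archive).items():
    print(bucket, names)
```

The buckets are deliberately coarse. The point is to spend generation credits and review time on the images most likely to pass the scroll test, and to leave the judgment calls (portraits, borderline resolution) to a human eye.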

The Workflow Integration Reality Check

The most honest question a creator can ask after their first week with an Image to Video AI generator isn’t “Does this work?” It’s “Where does this actually fit in my existing process?”

For rapid content prototyping, the answer is immediate. Need to pitch a client on a video concept but only have static mockups? Converting photos to video creates a shareable preview in minutes. Need to populate a social calendar but lack fresh footage? Your photo archive becomes a renewable resource.

For final deliverables, the answer gets complicated. The motion is impressive for zero production cost, but it carries telltale signs. Subtle artifacts. Repetitive motion patterns. That slightly “too smooth” quality that signals AI generation rather than camera capture.

Experienced creators learn to deploy Image to Video AI strategically. They use it for:

- Concept testing: Validating that a visual story works before investing in proper video production

- Filler content: Maintaining posting frequency during gaps between primary video shoots

- Format adaptation: Converting horizontal product shots into vertical video formats for Stories or Reels

- Motion thumbnails: Creating animated previews that can lift click-through rates on otherwise static content

They avoid using it for:

- Brand hero content where production value signals professional credibility

- Complex narratives requiring precise timing and emotional beats

- Client deliverables where “AI-generated” carries stigma or contractual complications

The Learning Curve Nobody Talks About

There’s a specific frustration that hits around day three of serious Image to Video AI experimentation. You’ve mastered the upload. You understand the interface. But your outputs still feel… generic.

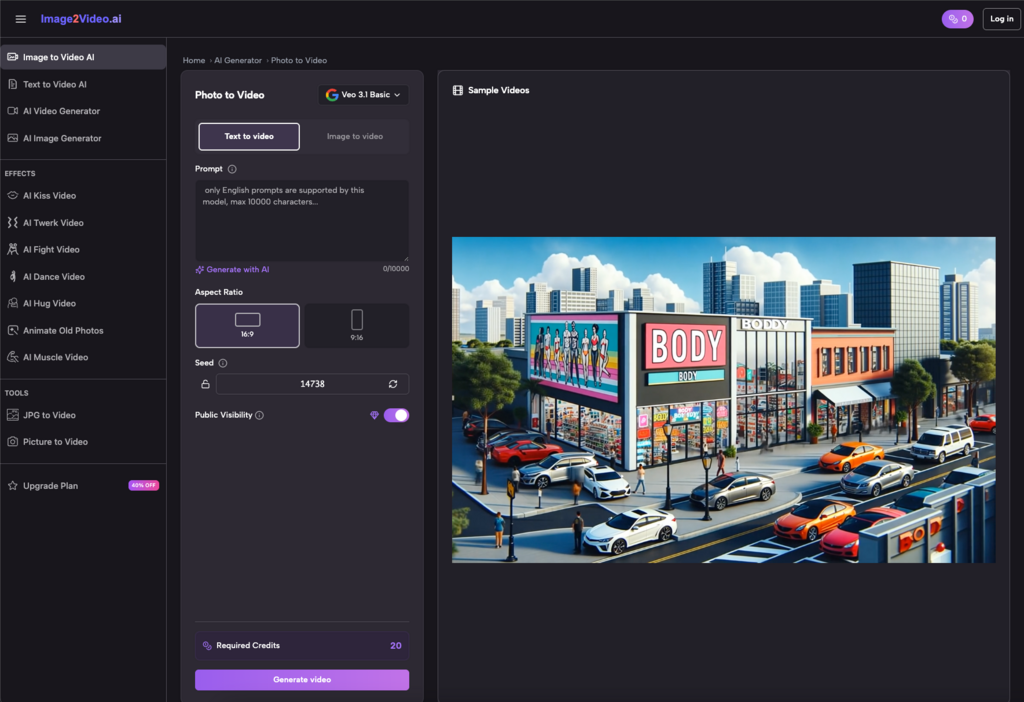

This is the prompting and curation learning curve. Early AI video tools offered limited control. Modern Photo to Video AI generators provide more parameters—motion intensity, camera movement direction, style references, duration controls. Learning which levers to adjust for different image types takes experimentation.

More importantly, creators must develop curation patience. The first generation rarely wins. The second might be closer. The third, with adjusted settings and a clearer intent, often hits the mark. But this iterative cycle contradicts the “instant transformation” marketing that draws people to these tools.

The creators who stick with Image to Video technology aren’t the ones who expect magic. They’re the ones who treat it like any other creative tool: something that yields better results with learned technique, clear intention, and realistic expectations about its role in the larger production chain.

When It Earns Its Place

After a month of realistic use, most solo creators reach a quiet conclusion. Photo to Video AI doesn’t revolutionize their workflow. It doesn’t replace cameras or make them video production experts overnight.

Instead, it solves a specific, valuable problem: motion content scarcity.

For creators sitting on years of static assets, the ability to convert photos to video turns dead inventory into active content. It lowers the barrier to testing video formats. It keeps video on the table when time and budget constraints would otherwise force static-only output.

The tool earns its place not through magic, but through practical utility. It works best when creators approach it with archived assets, clear intentions, and the patience to curate rather than just generate.

Your “Misc” folder probably won’t become a Hollywood reel. But it might finally stop collecting digital dust—and start collecting views instead.