The Friction of Fast Visuals: Evaluating an AI Video Generator as a Starting Point

Last Updated on 26 March 2026

There is a specific kind of fatigue that solo creators know well. It usually hits midway through a project, right when a script or a concept is locked, but the visual assets required to bring it to life are either too expensive to license, too time-consuming to shoot, or simply trapped behind a lack of specialized design skills.

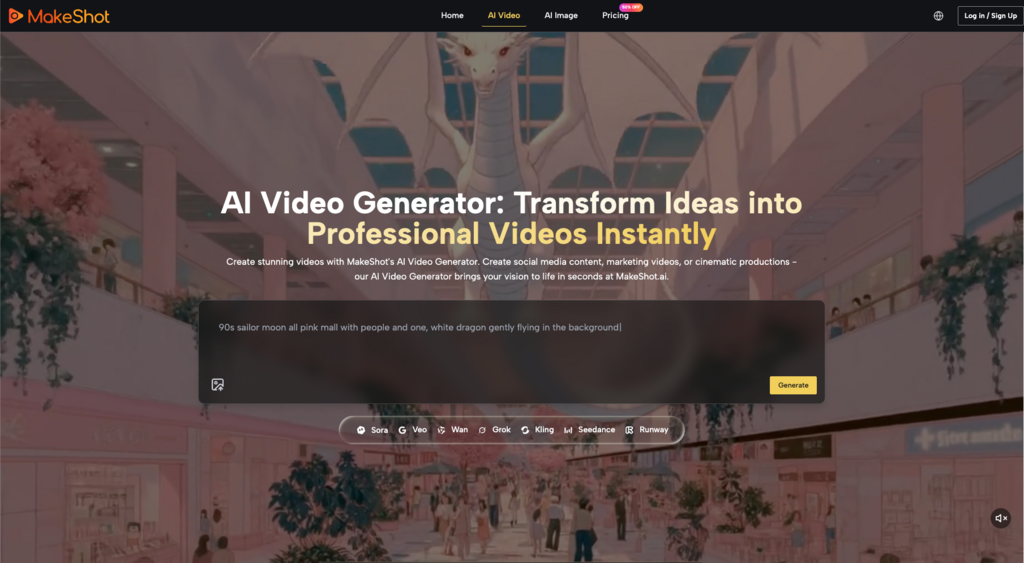

For the past couple of years, the proposed solution to this bottleneck has been rapid generation. The premise is straightforward: type a description, receive a visual. Platforms are increasingly aggregating different underlying technologies to serve as centralized hubs for this kind of work. MakeShot is positioned exactly in this space, framing itself as an all-in-one AI studio for generating professional videos and images easily, powered by a heavy-hitting trio of models: Veo 3, Sora 2, and Nano Banana.

Having access to multiple high-tier generation models in one platform sounds like an immediate workflow upgrade. But I’ve noticed that the initial appeal of these platforms rarely survives the first week of actual work without some serious recalibration. Integrating an AI video generator into a daily production schedule is seldom as seamless as the marketing suggests. It requires a fundamental shift in how a creator approaches ideation, revision, and final execution.

The Gap Between the Prompt and the Usable Draft

When solo creators first attempt to replace manual ideation with AI generation, the friction usually appears almost immediately. The assumption is that the tool will act as a direct translator of thought to screen.

In practice, turning a rough idea into a visual starting point is less like giving instructions to a seasoned art director and more like negotiating with a highly capable but deeply literal machine. You might need a specific atmospheric shot for a video essay or a conceptual image for a product mockup. You feed the concept into the studio. The platform, leveraging engines like Sora 2 or Veo 3, will almost certainly return something visually striking within moments.

But what people often notice after a few tries is that the prompt only opens that negotiation, and the first output is rarely the final draft. The generated asset might have the right lighting but the wrong subject placement. It might capture the mood perfectly but introduce bizarre, unusable artifacts in the background.

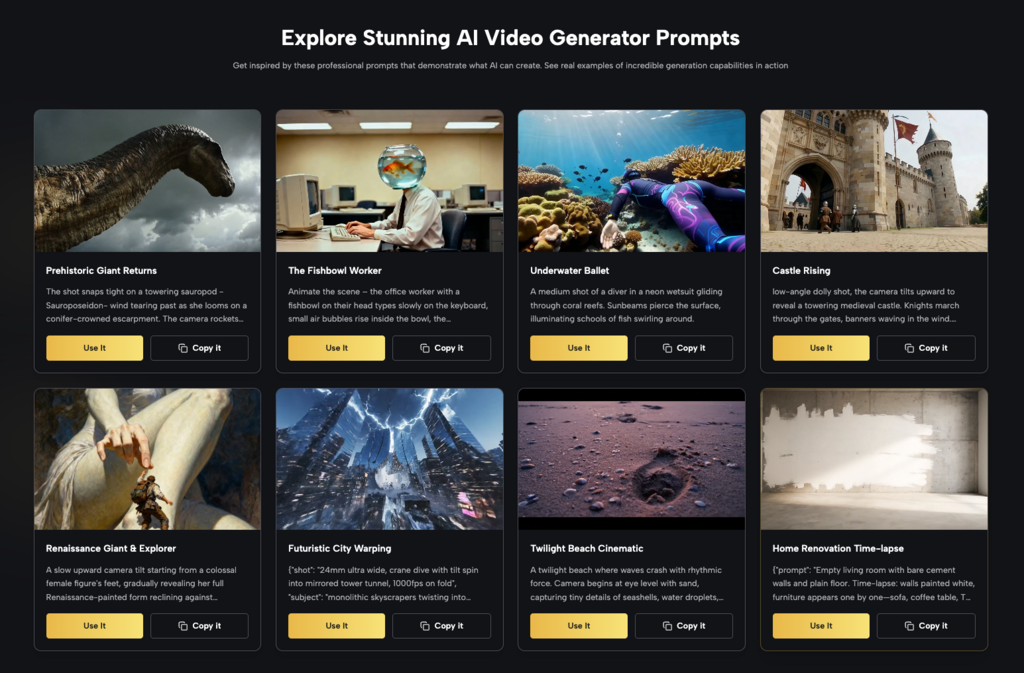

This is where beginners tend to misjudge the workflow. They expect the AI to deliver a finished product, and when it delivers an imperfect approximation, they view it as a failure of the tool. A more realistic approach is to treat these generated visuals strictly as advanced starting points: mood boards that can move, or rough sketches that happen to be rendered in high definition.

Where Speed Actually Helps (And Where It Creates Work)

The core trade-off of any AI-assisted visual workflow is the exchange of manual creation time for curation and revision time.

The speed of a platform running models like Nano Banana or Veo 3 is undeniably useful for breaking blank-page syndrome. If you are staring at an empty timeline and need to establish a visual tone quickly, generating ten variations of a scene in a few minutes is a massive advantage. It lets a solo operator test creative directions at a pace that manual illustration or stock-footage searches simply cannot match. You can see what a concept looks like in neon cyberpunk lighting versus a muted documentary style before committing to either.

However, that speed comes with a hidden tax. The part that usually takes longer than expected is coaxing a specific, nuanced adjustment out of a tool that prefers to generate entirely new concepts rather than tweak existing ones.

If a generated video clip is 90% of the way there, getting the final 10% right (fixing a weird camera pan, correcting a subject’s movement, or altering a single color) often requires discarding the clip entirely and rolling the dice on a new prompt. The speed of initial generation is frequently offset by the time spent trying to force the AI to adhere to strict, pre-existing creative boundaries. The creator’s role shifts from hands-on maker to persistent curator, sifting through dozens of variations to find the one that requires the least post-production triage.
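To make that trade-off concrete, here is a rough back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the function name, the parameters, and the sample numbers are hypothetical and are not measurements of MakeShot or any of its models. It also assumes each generation attempt is independent and has the same chance of being usable, which is a simplification of how re-prompting actually goes.

```python
def expected_minutes(p_usable: float, gen_minutes: float,
                     review_minutes: float, fix_minutes: float) -> float:
    """Estimate the expected minutes needed to land one usable clip.

    Hypothetical model: every attempt costs generation plus review time,
    and each attempt is usable with the same independent probability.
    """
    if not 0 < p_usable <= 1:
        raise ValueError("p_usable must be in (0, 1]")
    # Mean number of tries until the first success (geometric distribution).
    expected_attempts = 1 / p_usable
    # Post-production cleanup is paid once, on the clip that is kept.
    return expected_attempts * (gen_minutes + review_minutes) + fix_minutes


# Illustrative numbers only: 1 in 4 clips is usable, 2 minutes to generate,
# 1 minute to review each attempt, 15 minutes of cleanup on the keeper.
ai_minutes = expected_minutes(0.25, 2, 1, 15)
manual_minutes = 45  # assumed cost of sourcing the same shot by hand
print(f"AI route: {ai_minutes:.0f} min, manual route: {manual_minutes} min")
```

With these made-up inputs the AI route comes out ahead, but the estimate is very sensitive to the hit rate: drop it to 1 in 20 and the same clip costs 75 minutes under this model. Plugging in your own observed numbers after a week of use is the point of the exercise.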

What We Cannot Conclude From the Engine Room

It is easy to look at the underlying technology of a platform and make assumptions about its day-to-day utility. MakeShot explicitly states that it is powered by Veo 3, Sora 2, and Nano Banana. These are formidable names in the current generative landscape, implying a high ceiling for visual fidelity and complex motion.

However, knowing the engines powering the studio does not tell us how the car actually drives.

There is a hard limit to what can be concluded from the presence of these models alone. We cannot assume anything about the platform’s actual editing controls, its interface responsiveness, or how it handles the organization of hundreds of generated assets. We do not know if the platform allows for granular timeline adjustments, masking, or consistent character retention across multiple generations.

In my experience, a platform’s true usability for a solo creator is dictated entirely by how it bridges the gap between those raw models and the user’s practical needs. A powerful model trapped behind a clumsy interface or lacking basic workflow integrations is often more frustrating than a weaker model with excellent user controls. Until a creator actually attempts to push a project through the platform from concept to export, the commercial suitability of the output remains an open question.

The Shifting Baseline of “Pro” Quality

The promise of generating “pro videos and images easily” is a heavy one, largely because the definition of professional quality is a moving target.

When you first use a high-end generation tool, the sheer visual gloss of the output is usually enough to impress. A single, isolated clip of a sweeping landscape or a highly detailed portrait feels like a massive victory. But the novelty usually wears off around the third or fourth project, when the creator stops looking at the visual fidelity of a single frame and starts looking at the narrative cohesion of a sequence.

A stunning standalone clip is relatively easy to achieve. Three consecutive clips that look like they belong in the same universe, obey the same physics, and feature the same subjects are far harder to produce.

The practical judgment here is that the evaluation criteria for these tools must evolve past the “wow” factor. The true test of an all-in-one AI studio isn’t whether it can produce one breathtaking image to post on social media. It is whether it allows a solo creator to reliably extract usable, consistent assets that fit into a larger, coherent project without demanding more time than traditional methods.

Deciding If It Belongs in Your Routine

Adopting a new visual workflow requires caution. It is risky to build an entire production schedule or base a client pitch around an untested generation tool before deeply understanding its specific quirks and limitations.

If you are evaluating MakeShot, or any platform aggregating heavy generative models, the most pragmatic approach is to test it against your lowest-stakes friction points first. Do not try to generate a final, polished commercial on day one. Instead, use it to generate the B-roll you couldn’t afford to shoot. Use it to create the conceptual backgrounds for a presentation. Use it to quickly visualize a storyboard so you can decide if a script is actually working.

I tend to advise treating these aggregators as high-powered sketching tools first and foremost. If the combination of Veo 3, Sora 2, and Nano Banana lets you iterate on early-stage concepts faster than your current manual workflow, it holds genuine value. But if you find yourself endlessly re-prompting, fighting the tool for basic consistency, and spending hours trying to fix what the AI got wrong, the initial speed of generation is a false economy. The decision ultimately rests on whether the tool removes more bottlenecks than it creates.