Why AI Music Generator Tools Fit Modern Solo Creation

Last Updated on 18 March 2026

The creative bottleneck is no longer a lack of ideas. For many people, it is the gap between having an idea and being able to hear it. A writer may know the emotional temperature of a song before a single chord exists. A video creator may know the pacing of a scene before any soundtrack has been chosen. A small team may know the tone of a campaign before they have the budget or time to commission original music. That is where an AI Music Generator begins to matter. It does not make taste irrelevant, and it does not erase the value of real musical craft. What it does is shorten the distance between intention and first draft, which is often the hardest distance to cross.

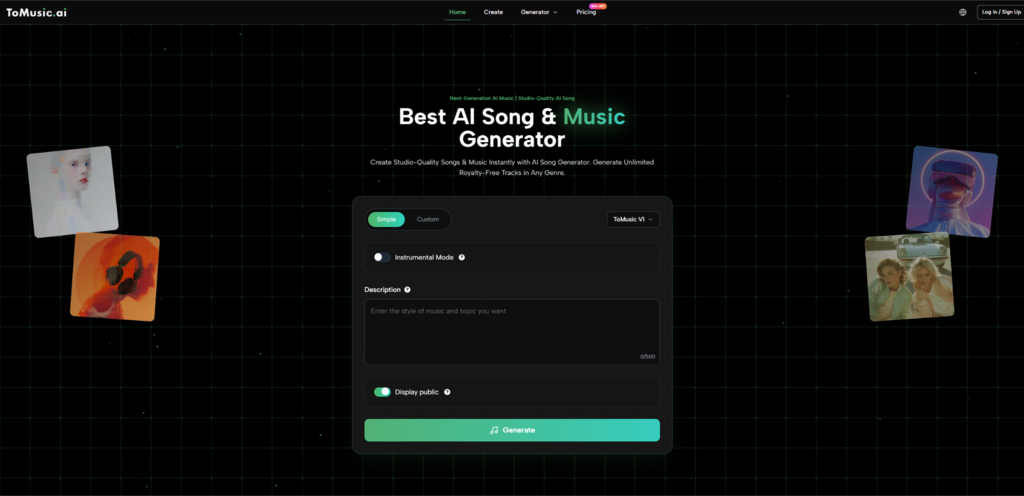

When I looked closely at ToMusic, what stood out was not only that it generates songs from prompts and lyrics, but that it frames music creation as a repeatable workflow rather than a one-click novelty. The platform presents multiple model versions, lyric-based generation, a music library that automatically stores outputs, and export options that make the result easier to move into real projects. In my view, that combination is what makes this category interesting. It suggests a shift from “AI music as spectacle” to “AI music as working material.” For solo creators especially, that distinction decides whether the output ends up in a finished project or stays a demo.

Why Solo Creators Need Faster First Drafts

The solo creator economy has changed what “music production” means. Many people who need music are not trying to become producers. They are trying to finish videos, build a brand, draft a concept, test an emotion, or package an idea quickly enough that the momentum does not disappear.

Why Time Pressure Shapes Creative Decisions

A single person running a channel, newsletter, short-form account, or indie product rarely has the luxury of a long production cycle. The work is continuous. One asset leads immediately to the next. In that context, music is often important but rarely isolated. It has to fit into a larger stack of decisions: script, visuals, editing, distribution, and timing.

For that kind of user, speed is not just convenience. It changes whether a creative experiment happens at all. In my observation, many ideas die because the effort required to prototype them feels too high. A promising lyric note stays in a draft folder. A mood for a trailer remains theoretical. A creator settles for stock music because the gap between original intent and execution feels too wide.

Why Draftability Matters More Than Perfection

This is why first drafts matter more than people admit. A rough but listenable version creates movement. It gives the creator something to react to. Once a sound exists, even if it is imperfect, the project becomes easier to shape. Without that sound, everything remains abstract.

ToMusic appears to serve that need by turning text and lyrics into complete songs rather than partial sketches. That matters because a full result is more useful for decision-making. A creator can judge pacing, emotional fit, vocal character, and overall impact much more easily from a complete piece than from a fragment.

How ToMusic Reframes Music As A Language Task

A major change in AI creativity tools is that they increasingly treat language as the main interface. Instead of asking the user to manipulate complex production software from the beginning, the system asks them to describe intent.

How Prompt-Based Music Lowers Entry Friction

ToMusic’s structure is built around text input and lyric input. That sounds obvious on the surface, but it reflects a larger design philosophy. The user is not required to begin with theory, notation, or DAW fluency. They can begin with a brief, a scene, an atmosphere, or a written lyric.

That design matters because a lot of creative people already think in language. They know how to describe “melancholic but not slow,” “warm and reflective,” “a female vocal with a soft rise in the chorus,” or “something that feels intimate rather than cinematic.” In traditional workflows, those descriptions might not be enough to produce audio. In this workflow, they become the starting point.

Why Multiple Models Make The Platform More Flexible

The platform also highlights multiple model versions, from V1 to V4. In practice, this suggests that generation is not treated as a single uniform process. Different models appear to be positioned around different trade-offs, with the pricing page describing V1 and V2 as standard quality, V3 as stronger in harmonies and rhythms, and V4 as the flagship model with the best vocals.

That is important because music is never one-dimensional. Some users care about speed. Some care about fuller arrangements. Some need better vocals. Some are testing broad ideas and do not need the most advanced mode every time. A multi-model structure makes the workflow feel more strategic and less random.

How Lyrics To Music AI Expands Who Can Finish Songs

There is a big difference between having something to say and knowing how to arrange it musically. Many people can write words they care about. Far fewer can transform those words into a complete song on their own.

Why Lyrics Often Need A Missing Second Half

Lyrics are often emotionally finished before they are musically usable. Someone may write a strong chorus, a confessional verse, or a memorable hook, but still have no melody, no arrangement, and no vocal structure. In the old model, the next step required either technical production ability or outside collaboration.

That is why Lyrics to Music AI is more meaningful than it first appears. It does not merely save effort. It expands authorship. It allows people who can already write language, but not compose in traditional ways, to hear their ideas in song form.

How Musical Interpretation Changes Meaning

The same lyric can sound fragile, ironic, triumphant, playful, or devastating depending on the musical frame around it. This is why lyric-based generation is not only about automation. It is about interpretation. The platform has to decide how words move through rhythm, where emphasis lands, what kind of phrasing supports the text, and how the arrangement reinforces tone.

In my experience with tools in this space, this is where the strongest outputs tend to reveal themselves. A weak result feels like words pasted onto a backing track. A stronger result feels like the music understands the lyric’s posture. ToMusic positions itself as capable of transforming written lyrics into complete songs with realistic vocal performances, and that is exactly the kind of promise that matters for lyric-driven users.

Where This Helps Most In Practice

The most obvious beneficiaries are not only aspiring musicians. They also include social creators, marketers, storytellers, and solo founders who need original musical material with a clear emotional direction. A personal poem can become a musical test. A product launch line can become a jingle draft. A spoken concept can become a mood piece for video. The value is not simply “a song exists.” The value is that the song becomes available early enough to affect the rest of the project.

What The Actual Workflow Looks Like

The front-end process appears intentionally simple. The complexity sits behind the interface, while the visible steps remain manageable for non-specialists.

Step One Starts With Text Or Lyrics

The user begins by entering either a text prompt or a set of lyrics. This is the instruction layer. It defines mood, style, pacing, and verbal content. On the generator page, the interface shows fields such as title, styles, genre, moods, voices, tempos, and lyrics, which suggests the prompt can be broad or more directed depending on how much control the user wants.

Step Two Selects The Generation Setup

The next part is choosing the model and any visible settings tied to the output. This matters because the platform supports multiple versions rather than one single generation mode. The user is not just sending text into a black box. They are deciding how they want the system to interpret the task.
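To make the first two steps concrete, here is a minimal sketch of how a creator might organize a brief before pasting it into the generator. The field names mirror the labels visible on the generator page, but the `SongBrief` class, its `to_prompt` method, and the example values are hypothetical illustrations of my own. This is not ToMusic's request format or API.

```python
from dataclasses import dataclass, field
from typing import List


# Hypothetical structure: field names echo the labels shown on the
# generator page; this is NOT ToMusic's actual request format.
@dataclass
class SongBrief:
    title: str
    genre: str = ""
    styles: List[str] = field(default_factory=list)
    moods: List[str] = field(default_factory=list)
    voice: str = ""
    tempo: str = ""
    lyrics: str = ""
    model: str = "V4"  # one of the versions the platform lists (V1 to V4)

    def to_prompt(self) -> str:
        """Join the non-empty descriptive fields into one line of text."""
        parts = [
            self.genre,
            ", ".join(self.styles),
            ", ".join(self.moods),
            self.voice,
            self.tempo,
        ]
        return "; ".join(p for p in parts if p)


brief = SongBrief(
    title="Late Train Home",
    genre="indie folk",
    moods=["warm", "reflective"],
    voice="female vocal with a soft rise in the chorus",
    tempo="mid-tempo",
)
print(brief.to_prompt())
```

Writing the brief down this way keeps the description and the model choice together, which makes it easier to change one element at a time between attempts.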

Step Three Generates The Song

Once the input and generation setup are in place, the system produces the song. This is where the platform’s promise of speed becomes practical. A creator can move from concept to audible result without stopping to build every layer manually.

Step Four Stores And Exports The Result

After generation, the track moves into the Music Library. According to the library page, it automatically saves all generated songs and stores metadata such as titles, tags, descriptions, lyrics, and generation parameters. That stored structure changes the platform from a simple generator into a reusable workspace. The pricing and library pages also indicate export options such as WAV and MP3 downloads, along with stem extraction and vocal removal on supported plans.

Why The Music Library Is More Important Than It Sounds

People often focus only on the generation moment, but long-term usefulness depends on what happens afterward. If a tool creates audio but gives the user no reliable way to organize and retrieve it, the tool becomes harder to use over time.

Why Retrieval Matters For Repeat Creation

A solo creator may generate multiple ideas for the same project. One version works for a teaser. Another fits a behind-the-scenes clip. Another becomes a reference for future work. If those outputs are scattered, the workflow breaks down. A library with searchable metadata solves a real problem: the user can revisit old ideas instead of recreating them from scratch.

How Stored Parameters Support Iteration

The fact that tracks are saved with generation parameters is especially useful. It means users can look back not only at the audio but also at what shaped it. In creative iteration, that kind of memory matters. It helps people understand which prompts, moods, or lyric structures led to stronger results.
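The same idea can be sketched as a small personal log kept alongside the library. The example below assumes a creator records each take with its tags, the parameters used, and their own rating after listening. `TrackRecord`, `find_by_tag`, `best_params`, and the sample entries are hypothetical and only loosely modeled on the metadata the library page describes. They are not part of ToMusic.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


# Hypothetical record, loosely modeled on the metadata the library page
# lists (titles, tags, lyrics, generation parameters).
@dataclass
class TrackRecord:
    title: str
    tags: List[str]
    lyrics: str
    params: Dict[str, str]  # e.g. the model and mood used for this take
    rating: int = 0  # the creator's own 1-5 score, added after listening


def find_by_tag(records: List[TrackRecord], tag: str) -> List[TrackRecord]:
    """Return every record carrying the given tag."""
    return [r for r in records if tag in r.tags]


def best_params(records: List[TrackRecord], tag: str) -> Optional[Dict[str, str]]:
    """Return the parameters of the highest-rated take for a tag, if any."""
    matches = find_by_tag(records, tag)
    if not matches:
        return None
    return max(matches, key=lambda r: r.rating).params


records = [
    TrackRecord("Teaser A", ["teaser", "launch"], "", {"model": "V3", "mood": "tense"}, rating=3),
    TrackRecord("Teaser B", ["teaser"], "", {"model": "V4", "mood": "warm"}, rating=5),
    TrackRecord("BTS clip", ["behind-the-scenes"], "", {"model": "V2", "mood": "playful"}, rating=4),
]
print(best_params(records, "teaser"))
```

The rating is the one piece the platform cannot supply. Pairing it with the stored parameters is what turns a pile of takes into a record of which choices worked.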

What Makes This Different From Casual Music Toys

A lot of AI music products look similar on first contact, but the meaningful differences appear once you think about repeated use.

| Dimension | Basic Music Toy | ToMusic Workflow |
| --- | --- | --- |
| Starting input | Usually short generic prompt | Prompt or full custom lyrics |
| Model choice | Often one default path | Multiple models from V1 to V4 |
| Output goal | Quick entertainment value | Full songs for real use cases |
| Library support | Often minimal | Automatic library with metadata |
| File handling | Basic export or none | WAV, MP3, stems, vocal removal |
| Repeat usage | Low continuity | Built for ongoing creative cycles |

The table is useful because it shows that the issue is not whether both tools can generate music. The issue is whether the generated output can be folded into a larger process. ToMusic looks more aligned with users who return often, compare versions, and treat output as part of a working archive.

Where The Platform Fits Best

The most realistic way to evaluate a tool like this is not to ask whether it can replace all musicians. That question is too broad and not very helpful. A better question is where it delivers the most practical value.

For Content Creators With Constant Publishing Needs

Creators who publish frequently often need music that is directionally right more than technically perfect. They need something that supports tone, speeds up editing, and keeps a consistent atmosphere across pieces. AI generation helps because it turns musical briefing into a faster and more repeatable action.

For Writers Who Want To Hear Their Words

A lyric or poem changes once it becomes audible. Sometimes the transformation reveals strengths. Sometimes it reveals awkward phrasing that was invisible on the page. Either way, hearing the words in a musical environment helps the writer make stronger choices.

For Small Teams Testing Ideas Before Spending More

Early-stage teams often work with uncertainty. They do not want to commit large budgets to every idea before they know it deserves expansion. A generator-based workflow allows them to test tone and musical direction early, then decide whether the result is enough on its own or should be developed further.

Why This Matters For People Working Alone

The solo creator is often editor, strategist, writer, and producer at the same time. Any tool that reduces one category of friction has outsized value. In that context, music generation is not only about sound. It is about preserving momentum.

What The Limits Still Are

A useful article should not pretend the system is perfect. The value becomes more credible when the boundaries are clear.

Prompt Quality Still Shapes Outcome

Better instructions usually lead to better results. If the prompt is vague, the song may sound broad or generic. Users still need some ability to describe what they want with clarity.

Iteration Is Usually Part Of The Process

In my observation, strong outputs often come after refinement. A first pass may establish direction, while later passes improve fit. That is normal. It is less like pressing a magic button and more like a fast sketching loop.

Taste Still Matters More Than Speed

Generation can accelerate production, but it does not automatically create memorable work. Someone still has to choose what feels honest, what fits the project, and what deserves to be kept.

Why The Shift Still Feels Important

Even with those limits, the broader shift is significant. Tools like ToMusic are changing music creation from a specialist-only workflow into a language-led creative process. For solo creators, that is not a small convenience. It is a structural change. It means more ideas can survive long enough to become something real, and in many creative careers, that is exactly where progress begins.